This article is not part of the Building Microsoft System Center Cloud series, but we will use the new cluster to host the VMs for that series.

In the previous article I did not let the Create Cluster Wizard configure the storage automatically, because the storage was not yet available to my servers. Now that it is available, we can configure all the settings manually.

Multipath I/O

Each disk here is connected over two Fibre Channel ports, so there is more than one physical path between the server and the storage, and therefore we need Multipath I/O (MPIO). With MPIO, when a controller, port, or switch fails, the operating system routes I/O through the remaining path transparently to applications.

Install MPIO

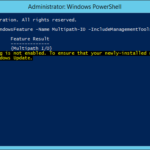

PowerShell: Install-WindowsFeature -Name Multipath-IO

What does it look like without MPIO?

- After the installation, MPIO is not active yet.

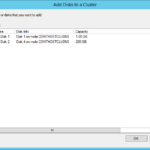

- We can see two pairs of disks, and the two disks in each pair are identical (same capacity). This means that MPIO has not been enabled yet.

Enable MPIO

- We have to add the Hitachi Universal Storage OPEN-V device to MPIO.

- After a restart, Disk Management shows two disks instead of the four we saw before MPIO was enabled.

- The first disk is our first LUN and the second disk is our second LUN. Each LUN has two independent connections to the storage.
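For those who prefer PowerShell, the MPIO steps above can be sketched as follows. This is a minimal sketch, assuming the MPIO module that comes with the Multipath-IO feature; the `OPEN-V*` filter is an assumption based on the device name above, so check what `Get-MPIOAvailableHW` actually reports for your array first. Run it on every node.

```powershell
# List multipath-capable devices that MPIO can see
Get-MPIOAvailableHW

# Add the array's hardware ID to the Microsoft DSM supported list.
# ASSUMPTION: the product ID starts with 'OPEN-V'; the IDs are copied exactly
# as reported (they can be padded with spaces), so no manual typing is needed.
Get-MPIOAvailableHW |
    Where-Object ProductId -like 'OPEN-V*' |
    ForEach-Object { New-MSDSMSupportedHW -VendorId $_.VendorId -ProductId $_.ProductId }

# MPIO claims the disks only after a restart
Restart-Computer

# --- After the restart ---
# List the disks that are now managed by MPIO
mpclaim.exe -s -d
```

Run the last command only after the node is back up; if MPIO works, each LUN appears once instead of twice.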

Cluster disks

Configure disks before you add them to the cluster

- Bring all disks online on all nodes (important step).

- Initialize disks on one node.

- There is no reason to use legacy MBR, even for small disks that will never exceed 2 TB, so we use GPT for all of them.

- Now it is possible to set the disks online and format them:

- Witness disk

- Size: at least 512 MB; I could not get a LUN smaller than 1 GB from the storage, so I use 1 GB.

- Drive letter: None

- File system: NTFS

- Volume label: Witness

- Cluster Shared Volume

- Drive letter: None

- File system: ReFS

- Starting with Windows Server 2012 R2, Resilient File System (ReFS) is supported for Cluster Shared Volumes (CSV), so we use the new file system.

- Volume label: CSV0

- Apart from bringing the disks online, you do not have to configure anything on the second node.
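The disk preparation above can be sketched with the Storage module cmdlets. This is a sketch under stated assumptions: the node names and disk numbers are hypothetical (disk numbers can differ between nodes, so verify them with `Get-Disk` first), and `FirstNode`/`SecondNode` stand for your cluster nodes.

```powershell
# ASSUMPTION: hypothetical node names and disk numbers; verify with Get-Disk first
$nodes       = 'FirstNode', 'SecondNode'
$witnessDisk = 1   # the 1 GB witness LUN (example only)
$csvDisk     = 2   # the CSV LUN (example only)

# Step 1, all nodes: bring the shared disks online and make them writable
Invoke-Command -ComputerName $nodes -ScriptBlock {
    $numbers = $using:witnessDisk, $using:csvDisk
    Get-Disk -Number $numbers | Where-Object IsOffline  | Set-Disk -IsOffline  $false
    Get-Disk -Number $numbers | Where-Object IsReadOnly | Set-Disk -IsReadOnly $false
}

# Step 2, first node only: initialize both disks as GPT
Initialize-Disk -Number $witnessDisk, $csvDisk -PartitionStyle GPT

# Witness: NTFS, label 'Witness'; -AssignDriveLetter is omitted on purpose
New-Partition -DiskNumber $witnessDisk -UseMaximumSize |
    Format-Volume -FileSystem NTFS -NewFileSystemLabel 'Witness' -Confirm:$false

# CSV: ReFS, label 'CSV0'; again no drive letter is requested
New-Partition -DiskNumber $csvDisk -UseMaximumSize |
    Format-Volume -FileSystem ReFS -NewFileSystemLabel 'CSV0' -Confirm:$false
```

If Windows still assigns a drive letter to one of the volumes, it can be removed in Disk Management before the disks are added to the cluster.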

What should the small disk be called: Quorum or Witness?

I have often seen people label this disk Quorum. That is incorrect. The witness disk contributes one vote to the quorum. For example, when the network between the two nodes is down, the node that currently owns the witness disk has the quorum (two of the three votes in our case). If the witness disk is down, the two nodes together still have the quorum. In my view it is not a good idea to name the disk Quorum, because the quorum and the witness disk are different things: the quorum is the majority of votes the cluster needs to keep running, and the witness disk is only one of the voters.
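The vote arithmetic above can be illustrated with a small helper. `Test-HasQuorum` is a hypothetical function, not a cluster cmdlet, and it uses a simplified, static vote count: it ignores dynamic quorum (Windows Server 2012) and dynamic witness (Windows Server 2012 R2), which adjust votes at runtime.

```powershell
# Hypothetical helper, not part of the FailoverClusters module.
# Static majority rule: a partition keeps quorum only with more than half of all votes.
function Test-HasQuorum {
    param(
        [int]$TotalVotes,        # votes configured in the whole cluster
        [int]$VotesInPartition   # votes the surviving partition can reach
    )
    return $VotesInPartition -gt [math]::Floor($TotalVotes / 2)
}

# Two nodes + witness disk = 3 votes in total
Test-HasQuorum -TotalVotes 3 -VotesInPartition 2  # node owning the witness: True
Test-HasQuorum -TotalVotes 3 -VotesInPartition 1  # isolated node: False
Test-HasQuorum -TotalVotes 3 -VotesInPartition 2  # both nodes, witness down: True
```

This is also why the witness matters in a two-node cluster: without it there are only two votes, and a network split leaves each node with exactly half.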

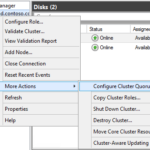

Add disks to the cluster
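In PowerShell, adding the disks can be sketched like this, assuming the FailoverClusters module is available and the commands run on one of the cluster nodes. By default the cluster names the new resources Cluster Disk 1, Cluster Disk 2, and so on.

```powershell
# Show the shared disks that all nodes can see and that are not clustered yet
Get-ClusterAvailableDisk

# Add all of them to the cluster as Physical Disk resources
Get-ClusterAvailableDisk | Add-ClusterDisk

# Check the new resources: name, state and owner group
Get-ClusterResource
```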

Cluster Quorum Settings – selection of Witness disk

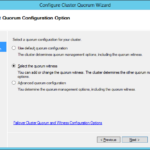

Configure Cluster Quorum Settings (select the witness disk).

- Configure Cluster Quorum Settings…

- Select the quorum witness

- Configure a disk witness

- Select the witness disk.

- The witness disk was added.
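The wizard steps above map to a single cmdlet. A minimal sketch, assuming the 1 GB NTFS disk was added under the default name Cluster Disk 1 (check the actual name with `Get-ClusterResource` before running it):

```powershell
# ASSUMPTION: 'Cluster Disk 1' is the 1 GB witness LUN
Set-ClusterQuorum -DiskWitness 'Cluster Disk 1'

# Show the quorum configuration and which resource acts as the witness
Get-ClusterQuorum
```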

Check the Witness disk

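- PowerShell: move the core cluster group, which contains the witness disk, to the second node and check that the disk comes online there: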

Move-ClusterGroup -Name 'Cluster Group' -Node SecondNode
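After the move, ownership and state can be checked as sketched below. `FirstNode` in the last line is a hypothetical node name, in the same style as `SecondNode` above.

```powershell
# Which node owns the core cluster group (and therefore the witness disk) now?
Get-ClusterGroup -Name 'Cluster Group'

# The witness resource should be Online on its new owner node
Get-ClusterQuorum | Select-Object -ExpandProperty QuorumResource

# Optionally move the group back (hypothetical node name)
Move-ClusterGroup -Name 'Cluster Group' -Node FirstNode
```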

Configure Cluster Shared Volumes
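Turning the data disk into a Cluster Shared Volume is one cmdlet. A sketch, assuming the ReFS LUN was added under the default name Cluster Disk 2 (verify with `Get-ClusterResource`):

```powershell
# ASSUMPTION: 'Cluster Disk 2' is the ReFS LUN labelled CSV0
Add-ClusterSharedVolume -Name 'Cluster Disk 2'
```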

Check the new CSV
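The new CSV can also be checked from PowerShell. A minimal sketch, assuming the system drive is C:, where CSVs are mounted under C:\ClusterStorage on every node:

```powershell
# CSV name, state and current owner node
Get-ClusterSharedVolume

# Path under C:\ClusterStorage where the volume is mounted
Get-ClusterSharedVolume | Select-Object -ExpandProperty SharedVolumeInfo

# The same folder should be visible on every node
Get-ChildItem -Path 'C:\ClusterStorage'
```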

Set descriptive names for the storage
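Renaming can be sketched as follows. The default resource names and the `Volume1` folder are assumptions (check them with `Get-ClusterResource` and `Get-ChildItem C:\ClusterStorage`), and the new names simply mirror the volume labels used earlier.

```powershell
# ASSUMPTION: default names 'Cluster Disk 1' (witness) and 'Cluster Disk 2' (CSV)
(Get-ClusterResource -Name 'Cluster Disk 1').Name = 'Witness'
(Get-ClusterSharedVolume -Name 'Cluster Disk 2').Name = 'CSV0'

# Optionally rename the CSV mount point folder as well.
# Do this before any VMs are stored on the volume.
Rename-Item -Path 'C:\ClusterStorage\Volume1' -NewName 'CSV0'
```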

What does it look like without Multipath I/O?

Just for those who are interested…

- We are using Fibre Channel (FC), so without MPIO it looks as if four disks were directly connected to every node. In fact, there are two LUNs, each connected to every server over two FC paths.

- Without MPIO we see two pairs of disks, and it is possible to format one disk of a pair.

- After formatting and refreshing, the Disk Management console shows a partition on all disks, because both disks of each pair are the same LUN.

5 responses to “Implementing Windows Server 2012 R2 Hyper-V Failover Cluster – Part 4 – Cluster storage and quorum configuration”

Great articles, all steps helpful.

Thx Vikram, I’m glad I could help.

Hi Rudolf, I have a question: do we need to assign the quorum disk from the storage to the second node? I mean, the quorum disk will use a shared LUN from the storage. Thanks for the help 🙂

Audhie 2016-08-02 10:04: There is no need to configure the quorum disk on the second node.

Hello, if I add a second FC storage to the cluster, do I have to create a second witness/quorum disk? Thanks in advance!